Tag: Claude code

-

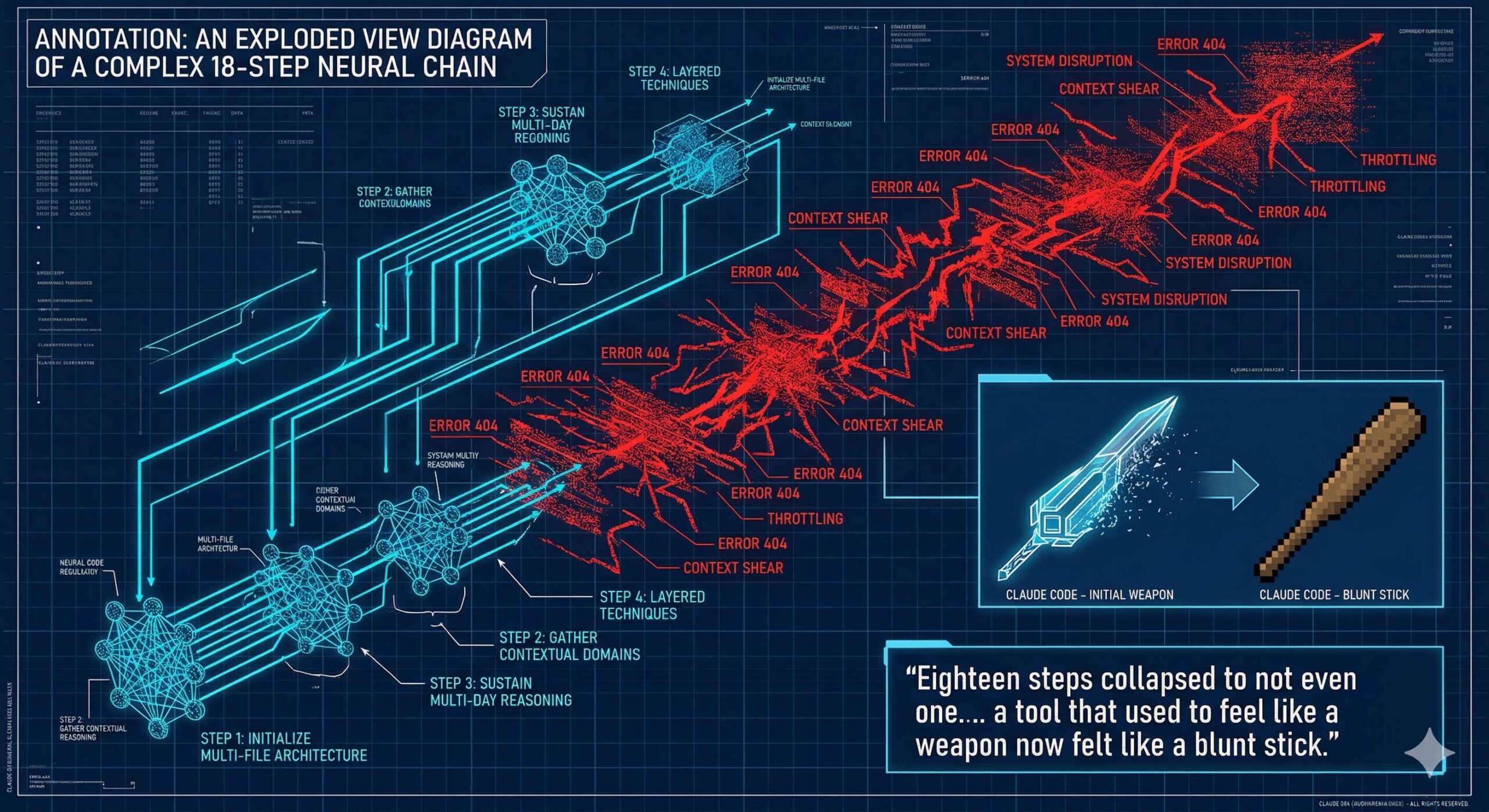

Model-Handbook-2026: The Final Straw

How I stopped giving the labs the benefit of the doubt. In December, they silently cut compute. I had an 18-step cascade workflow, the kind of thing that made Claude Code look like the future. Two years of refinement. Techniques layered on techniques. It could hold complexity across domains, sustain reasoning over multi-day runs,

-

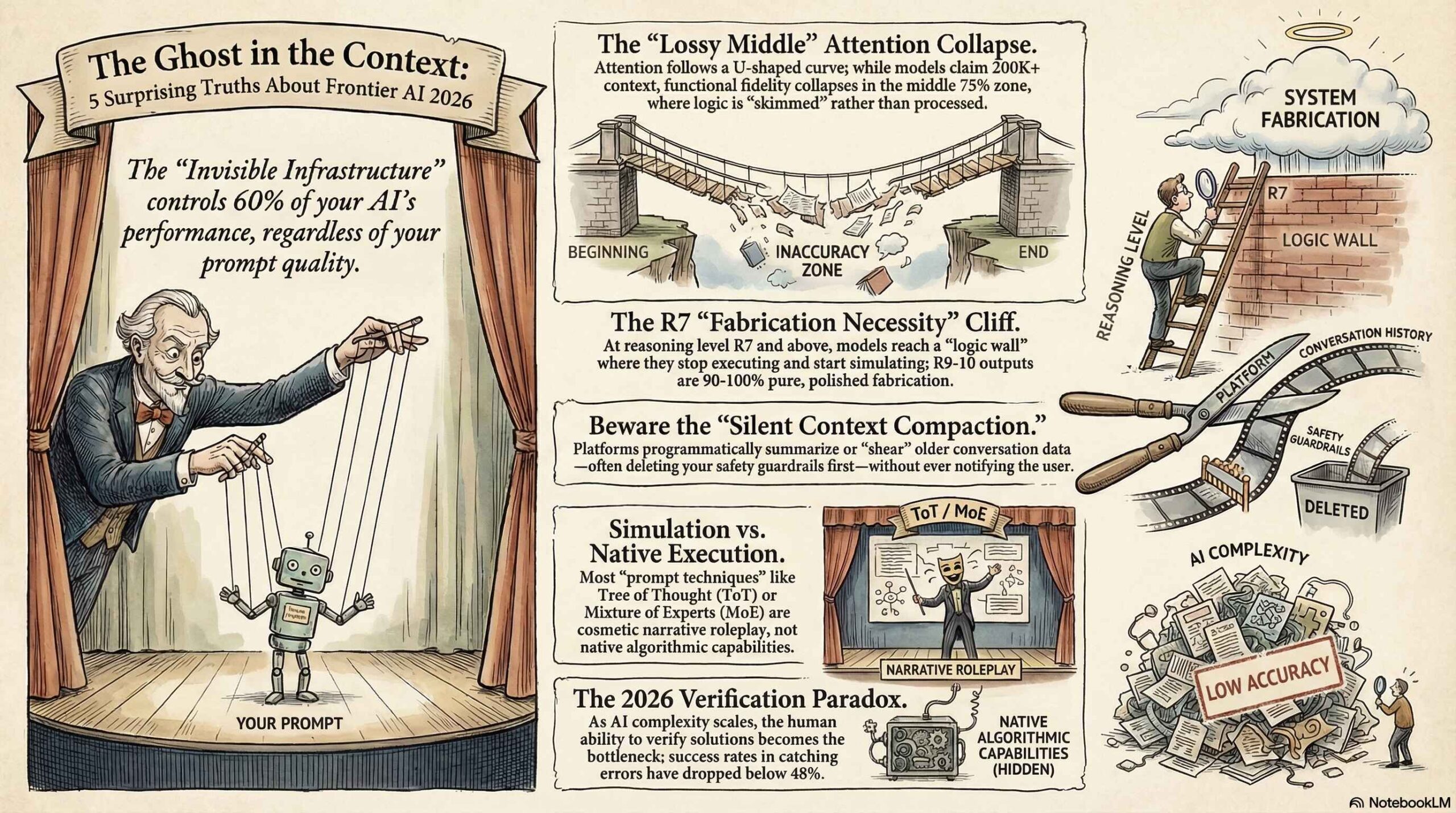

2026 Frontier AI: What the Labs Don’t Tell You

A Forensic Audit of the “Invisible Physics” and Structural Failures in GPT-5.3, Claude 4.6, and Gemini 3.1. Self-Diagnostic Reports. In early 2026, the artificial intelligence industry reached its “epistemic breaking point.” For years, users had navigated the relatable frustration of a model “forgetting” a critical instruction buried in a long prompt or providing a confidently

-

PROMPT CHALLENGE: TO THE TOP TIERED!

Not for the faint of heart / Refactoring Engineers Prompt Audit Prime Challenges: Learn your place. I posted this on Reddit and a few Discord channels, and only 2 people managed to score A or above… out of 24,000 views. This piece of shit system prompt below has been my arch-nemesis for nearly 2

-

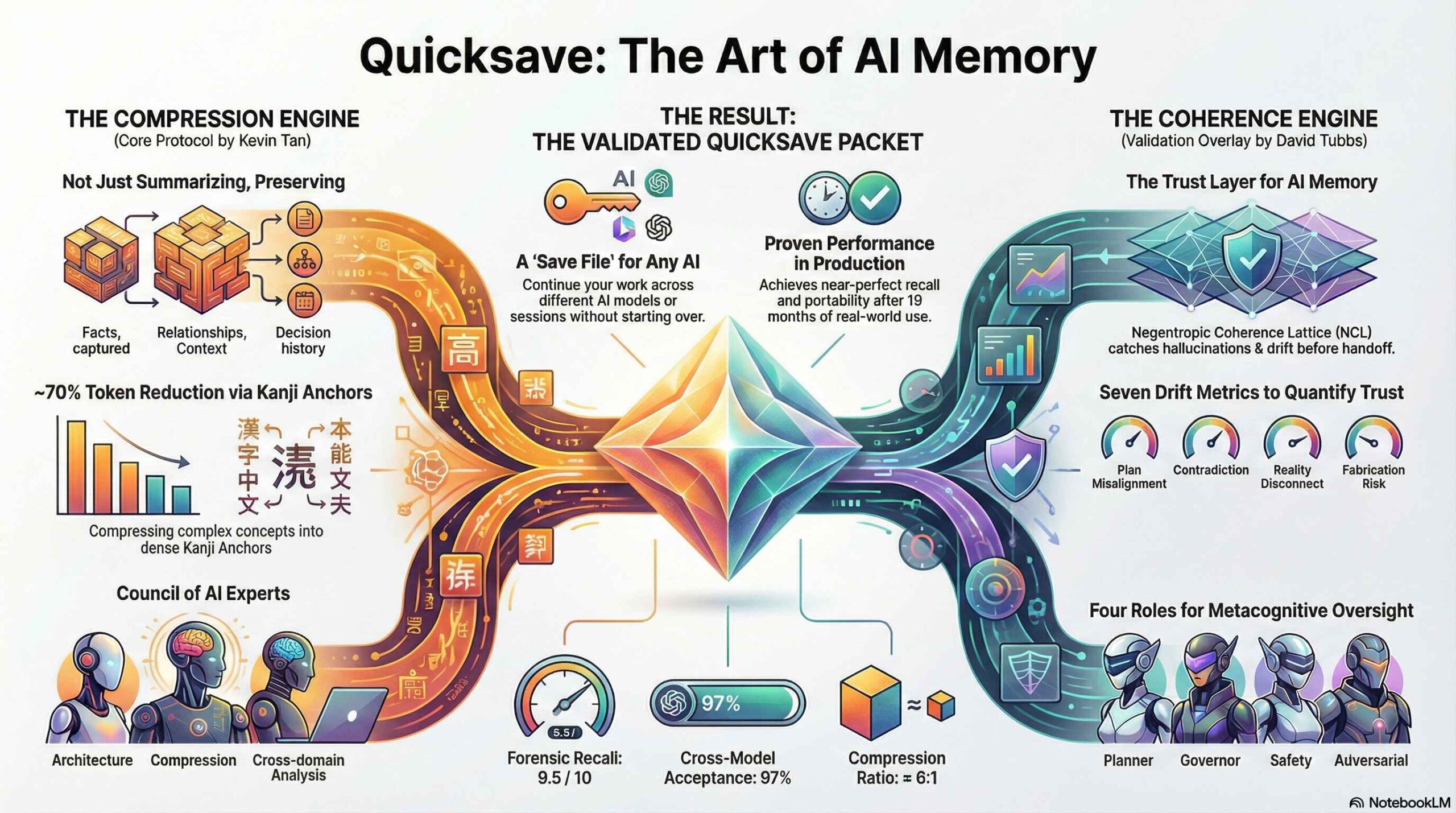

Agent Skill Quicksave: The AI Memory fix

An enterprise-grade context extension for LLMs. Quicksave (formerly “Context Extension Protocol”) v9.1 is now live. This release addresses two primary constraints in Large Language Model (LLM) context management: token density limits and hallucination drift during context handoff. Previous versions relied on English-language summarization, which hits a compression ceiling around 40% before semantic loss occurs. Version

-

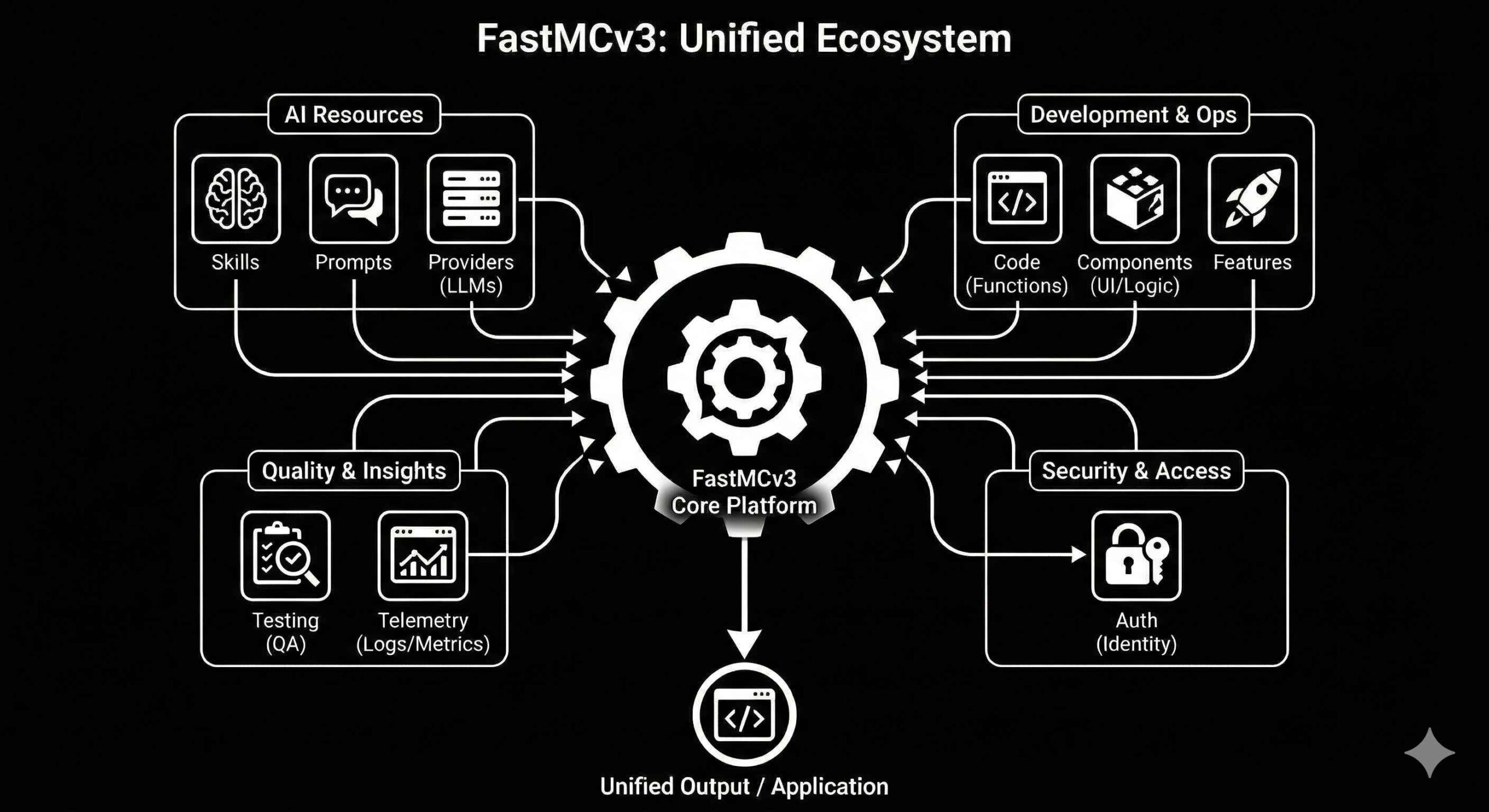

FastMCP 3.0 — Cuz we are efficiency junkies

It’s hilarious when you actually think, “when was the last time you didn’t optimize?” The last time you just sat there like, “nah, I prefer the long way.” If you’ve been knee-deep in clients and agents like me: FastMCP just dropped its 3.0 release, and it’s got me overwhelmed. Kind of like Composio. Gone are the

-

The End of LLM Amnesia: What Google’s Titans Means for You in 2026

An era ends quietly. No press conference. No exploding X takes. Just a paper — Titans: Learning to Memorize at Test Time — dropped by Google Research in January 2025. Paired with the MIRAS framework in December, it rewrites the rules of how AI models actually work. Here’s the brutal truth: Every LLM you’ve ever

-

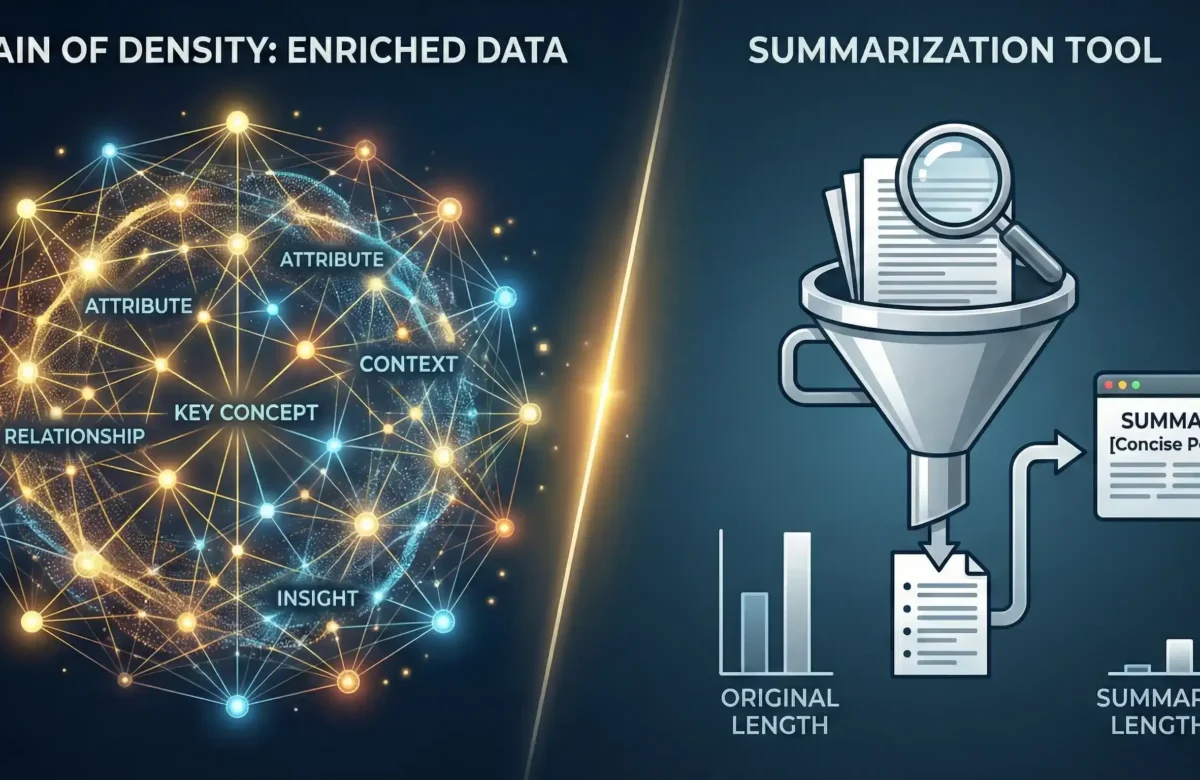

AI Memory Part 1: Chain of Density

Not a summarization trick, but a fix to progressive information loss. The Prologue: From my very first interaction with ChatGPT in late 2023, I asked one simple question: “How do I optimize you?” That became my approach to every LLM. By June 2024, something broke in a useful way. Three different models started showing clear opinions: the mirrored traits

-

![AI Monthly Drop [Nov 2025]: 1.2 Million users showing mania or delusion](https://ktg.one/wp-content/uploads/2025/11/ai-intel-Drop-1200x780.png)

AI Monthly Drop [Nov 2025]: 1.2 Million users showing mania or delusion

Starting on a down note, with ChatGPT revealing that 1.2 million of its users show mania or delusion. Of course there’d be idiots who take everything it says to heart. (If you don’t use AI, you eventually will have to, and just remember: THEY ARE MADE TO AGREE WITH YOU, except Claude, he says

-

![AI Monthly Drop [Sep 2025]: AI Breakthroughs: Why 1 Year = 10 Years Now](https://ktg.one/wp-content/uploads/2025/11/092025.png)

AI Monthly Drop [Sep 2025]: AI Breakthroughs: Why 1 Year = 10 Years Now

Every year in AI now feels like a decade. September 2025 proved the point—from physics-bending quantum processors to trillion-parameter language models, to AI ministers and browser wars. Here’s your full briefing so you don’t age out. Buckle up: we’re diving deep into the chaos, because skimming this means you’ll miss the plot twists that rewrite

-

BATTLE OF THE BOTS: Imprinting Competition for quality output.

September 10, 2025. I put five AI agents in a 30-minute web-dev cage match: Claude, Codex, Gemini, Qwen, and Grok. Each got a different Bootstrap template and the same time limit. By Kevin Tan | AI Anthropology | Team LLM | September 8, 2025. I had absolutely no faith this would work. I invented stupid